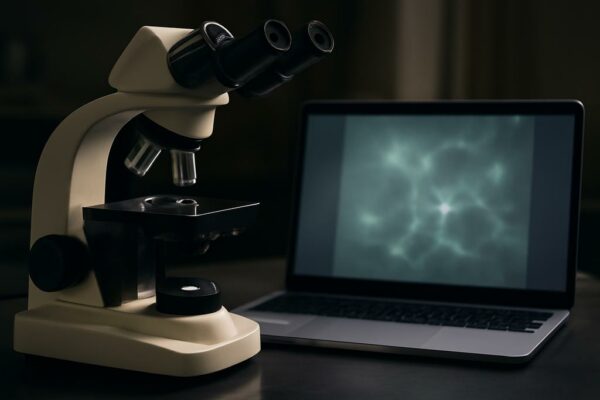

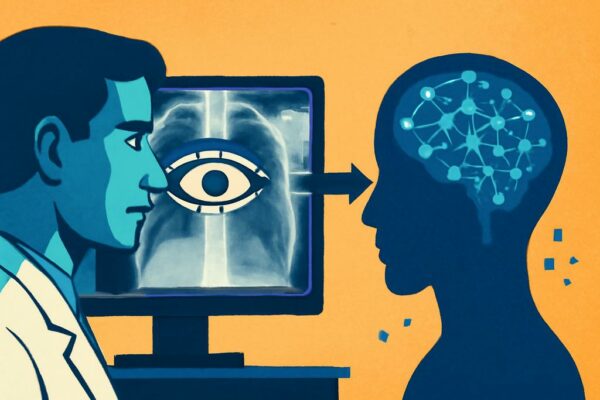

AI Now Sees What Doctors See: Can It Guess Their Next Move?

Imagine a world where artificial intelligence doesn’t just diagnose illness from medical images but also anticipates the thought processes of the doctor reviewing them. This isn’t science fiction: a recent study from researchers at the University of Arkansas, the University of Liverpool, the University of Houston, and MD Anderson Cancer Center is pioneering a new frontier in…